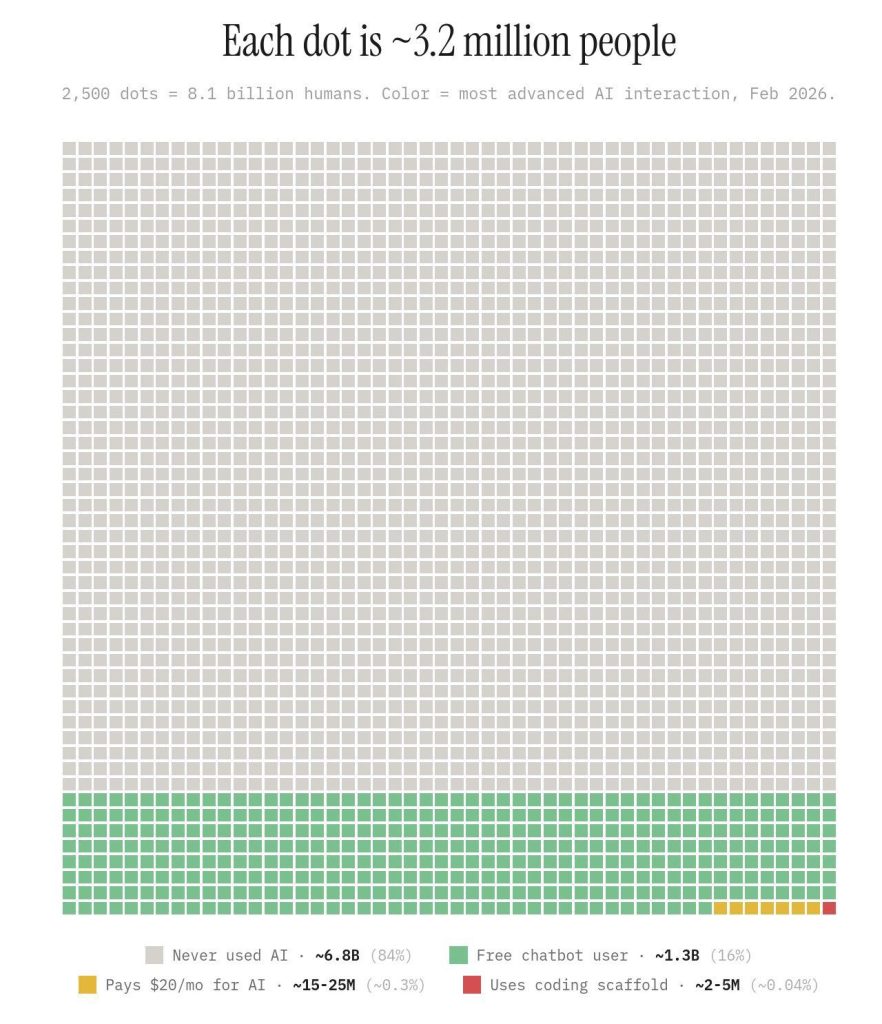

If you’re reading this on a screen, there’s a decent chance you’re not in the grey. According to recent estimates, 84% of the world’s population, roughly 6.8 billion people, has never intentionally used generative AI. Not “doesn’t use it regularly.” Never. Not once. For those of us building careers, conversations, and creative workflows around tools like ChatGPT, Midjourney, or Claude, this statistic lands like a splash of cold water. Our group chats buzz with prompts and outputs. Our colleagues trade tips on the latest models. It’s easy to forget that we’re not the center of the universe; we’re just living in a particularly loud and well-lit bubble.

This gap matters because generative AI isn’t just another piece of software. It’s a tool for thinking itself, a reasoning partner, a creativity amplifier, a productivity multiplier. The difference between using it and not using it isn’t merely efficiency; it’s cognitive. Someone wrestling with an AI to refine an argument or brainstorm a solution is learning to ask better questions, structure thoughts more clearly, and prototype ideas at a pace previously reserved for the exceptionally gifted or well-resourced. And because the learning happens through iteration, the gap compounds daily.

The 0.04% building with AI aren’t just ahead; they’re shaping the terrain that everyone else will eventually have to navigate.

But here’s where the story forks. It would be easy to slide into determinism, to see this as the next great class divide, hardwired by market forces and existing privilege. Yet the outcome is far from fixed. The tools themselves are becoming cheaper, more intuitive, and more accessible with each passing quarter. The interface is shifting from arcane prompt engineering to natural conversation, voice, and ambient assistance. This gravitational pull will inevitably draw millions from the grey into the green, simply by lowering the friction of that first intentional interaction. Technology has a habit of democratizing itself, even if it never does so evenly or quickly enough.

The real lever, though, isn’t the technology; it’s literacy. AI literacy isn’t about learning to code or understanding transformer architectures. It’s about knowing what these models do well (synthesis, drafting, ideation) and where they stumble (hallucination, bias, genuine understanding). It’s about developing the instinct to reach for AI when it’s useful and set it aside when it’s not. That is the core of our Technology Transcendent focus: getting you there quickly and keeping you on the right track, so you can get the most value from these tools. This kind of literacy can be taught. It can be embedded in schools, community centres, and workplace training. It can spread through the very networks that currently define our bubbles, if we’re intentional about widening the on-ramp rather than just marveling at how far ahead we’ve gotten.

So no, the gap between the 84% and the 0.04% isn’t destiny. It’s a snapshot of an early adopter curve, not a permanent caste system. But it is a responsibility. For those of us already engaging with these tools, the question isn’t whether we’re ahead or behind. It’s whether we’ll use our position to build bridges or simply enjoy the view. The outcome is being written now, in the choices we make about who gets invited into the conversation, and whether, when they arrive, they find a tool that empowers them or a gate that excludes them.