Something has quietly fractured in the machinery of modern marketing. The past year has seen an unprecedented surge in content production, much of it fuelled by generative AI tools that promise limitless scale at marginal cost. Yet the anticipated uplift in engagement has not materialised. Instead, a growing body of research suggests that audiences are becoming not only more discerning, but more sceptical. Surveys indicate that roughly half of consumers can now identify AI-generated content with reasonable accuracy; when they do, their behaviour shifts measurably. More than half report a decline in engagement with such material, signalling not merely a preference but an erosion of trust that increasingly carries commercial consequences.

The dilemma is compounded by what might be termed a transparency paradox. Disclosure of AI involvement, once assumed to remedy scepticism, produces mixed outcomes. On one hand, labelling content as AI-generated can increase perceptions of honesty and even corporate integrity. On the other, it reinforces a psychological distance between brand and audience. Baseline sentiment towards AI remains markedly lower among consumers than among marketers, revealing a persistent perception gap. While advertisers emphasise efficiency and scalability, audiences appear to interpret machine-generated messaging as impersonal, even evasive. In this context, transparency satisfies regulatory and ethical expectations, but does little to restore the emotional resonance that underpins effective communication.

Search dynamics reinforce this divergence. Google has increasingly prioritised signals aligned with experience, expertise, authoritativeness and trustworthiness, criteria that AI-generated content, in its unrefined form, often struggles to meet. Studies suggest that such material tends to underperform in ranking metrics unless augmented by substantive human oversight. Consumer perception mirrors these algorithmic biases. Research by NielsenIQ indicates that even technically competent AI-generated advertisements frequently fail to impress, with audiences describing them as formulaic or emotionally flat. Across social platforms, awareness of AI authorship correlates with reduced trust and diminished interaction, reinforcing the notion that scale alone cannot substitute for authenticity.

The challenge becomes more acute when brands attempt to deploy AI in emotionally charged contexts. Marketing has long relied on the simulation of intimacy: storytelling that evokes empathy, aspiration or belonging. When people perceive such narratives as originating from machines, the effect can invert. Academic work published in the Journal of Business Research describes an “AI-authorship effect”, whereby consumers experience discomfort or even moral aversion when emotional messaging is attributed to non-human agents. The implication is not merely that AI struggles to replicate human feeling, but that audiences actively resist the notion that it should. Authenticity, in this sense, is not just a stylistic attribute but a perceived origin.

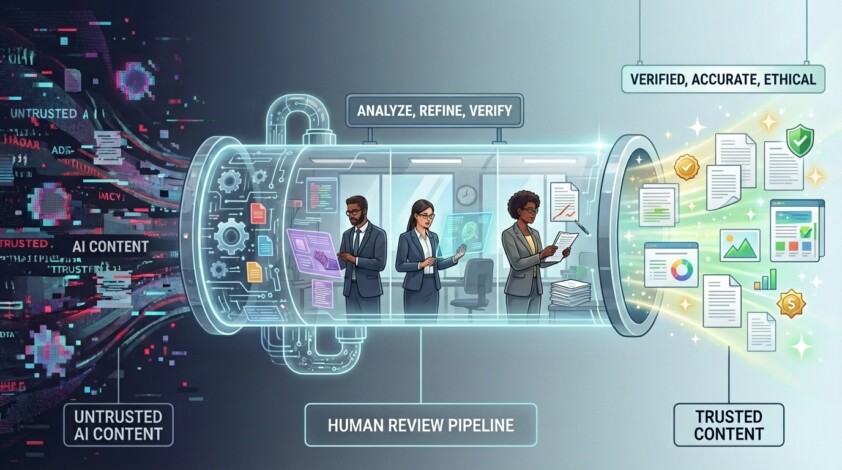

Against this backdrop, the emerging consensus is that the solution lies not in abandoning AI but in reframing its role. The most effective organisations are those that adopt hybrid models in which human expertise systematically refines, validates, and contextualises machine-generated outputs. Evidence suggests that content produced through such workflows significantly outperforms fully automated alternatives, both in engagement and in search visibility. The difference is not incremental but multiplicative, reflecting the complementary strengths of computational scale and human judgement.

A new generation of platforms is attempting to industrialise this hybrid approach. Among them, SmythOS exemplifies the shift from isolated tools to orchestrated systems. Rather than treating AI as a monolithic solution, such platforms decompose content production into modular processes: generation, evaluation, refinement and verification. Techniques such as retrieval-augmented generation, multi-agent validation, and human-in-the-loop review are embedded directly into workflows, enabling organisations to scale output without relinquishing control. The emphasis is less on producing more content than on ensuring that what is produced remains accurate, coherent, and aligned with brand intent.

This architectural shift addresses a critical gap in many current implementations: the absence of systematic quality control. Companies have proved adept at automating creation, but far less so at automating verification. As a result, errors, whether factual inaccuracies or tonal misjudgements, propagate quickly across channels. By contrast, orchestrated systems introduce layers of accountability, from real-time fact-checking against proprietary data sources to audit trails that document how each piece of content evolves. Such transparency is not merely operationally useful; it is increasingly essential in an environment where regulatory scrutiny and consumer expectations are converging.
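
For readers who want a concrete picture, the staged workflow described above might be sketched as follows. This is a minimal illustration under stated assumptions, not a description of how SmythOS or any other platform is built: the `Draft` class, the stage functions and the `reviewer` callback are all hypothetical names, and the `generate` stub merely stands in for a call to a generative model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    text: str
    approved: bool = False
    audit_trail: list = field(default_factory=list)

    def log(self, stage: str, note: str) -> None:
        # Each stage records what it did, so the draft's history can be audited.
        self.audit_trail.append((stage, note))


def generate(brief: str) -> Draft:
    # Hypothetical stub standing in for a generative model call.
    draft = Draft(text=f"{brief} It is loved by 100% of users.")
    draft.log("generate", "draft created from brief")
    return draft


def evaluate(draft: Draft, unsupported_claims: list) -> list:
    # Flag any claim that the brand cannot substantiate.
    issues = [claim for claim in unsupported_claims if claim in draft.text]
    draft.log("evaluate", f"{len(issues)} issue(s) flagged")
    return issues


def refine(draft: Draft, issues: list) -> None:
    # Remove flagged claims; a fuller system might request a rewrite instead.
    for claim in issues:
        draft.text = draft.text.replace(claim, "").strip()
    draft.log("refine", f"{len(issues)} claim(s) removed")


def verify(draft: Draft, unsupported_claims: list) -> bool:
    # Re-check after refinement so that nothing unsupported slips through.
    clean = not any(claim in draft.text for claim in unsupported_claims)
    draft.log("verify", "passed" if clean else "failed")
    return clean


def run_pipeline(brief: str, unsupported_claims: list,
                 reviewer: Callable[[Draft], bool]) -> Draft:
    draft = generate(brief)
    refine(draft, evaluate(draft, unsupported_claims))
    if verify(draft, unsupported_claims):
        # Human-in-the-loop gate: nothing is approved without sign-off.
        draft.approved = reviewer(draft)
        draft.log("human_review", "approved" if draft.approved else "rejected")
    return draft


if __name__ == "__main__":
    result = run_pipeline(
        brief="Our new kettle boils in ninety seconds.",
        unsupported_claims=["It is loved by 100% of users."],
        reviewer=lambda d: len(d.text) > 0,  # placeholder for a human decision
    )
    print(result.text)
    for stage, note in result.audit_trail:
        print(stage, "-", note)
```

The point of the sketch is structural rather than technical: verification runs as a separate step after refinement, approval rests with a person rather than the model, and every stage leaves a record of what it changed.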

Yet even as tooling matures, the broader lesson remains consistent with earlier cycles of technological adoption. The failure of many AI-driven marketing initiatives stems from a category error: treating the technology as a replacement for human creativity rather than as an amplifier of it. Scaling output without scaling discernment inevitably dilutes quality. The organisations beginning to close this gap are those that recognise content not as a volume problem but as a cognition problem, one that requires the integration of machine efficiency with human sensibility.

It is within this context that a parallel ecosystem of advisory and innovation firms is gaining prominence. Technology Transcendents, for instance, advocates a model centred on cognitive augmentation rather than substitution. Its approach focuses on redesigning marketing and content systems so that AI operates as an extension of human insight, not a proxy for it. This involves embedding governance, interpretability and domain expertise into every stage of the workflow, ensuring that machine-generated outputs remain accountable to human intent.

Such firms argue that the future of marketing effectiveness will depend less on the sophistication of generative models and more on the coherence of the systems in which they are deployed. In practice, this means aligning data, technology and talent around a unified objective: preserving authenticity at scale. The combination of human cognition and machine capability becomes not a compromise, but a multiplier, one that restores trust while retaining the efficiencies that made AI attractive in the first place.

The trajectory of the industry suggests that the era of indiscriminate content generation is drawing to a close. What replaces it is likely to be more disciplined, more transparent and, paradoxically, more human. In an environment saturated with synthetic messaging, authenticity becomes scarce, and therefore valuable. The firms that succeed will not be those that produce the most content but those that can ensure it still feels as though it was meant for someone.